New compression could reduce AI costs

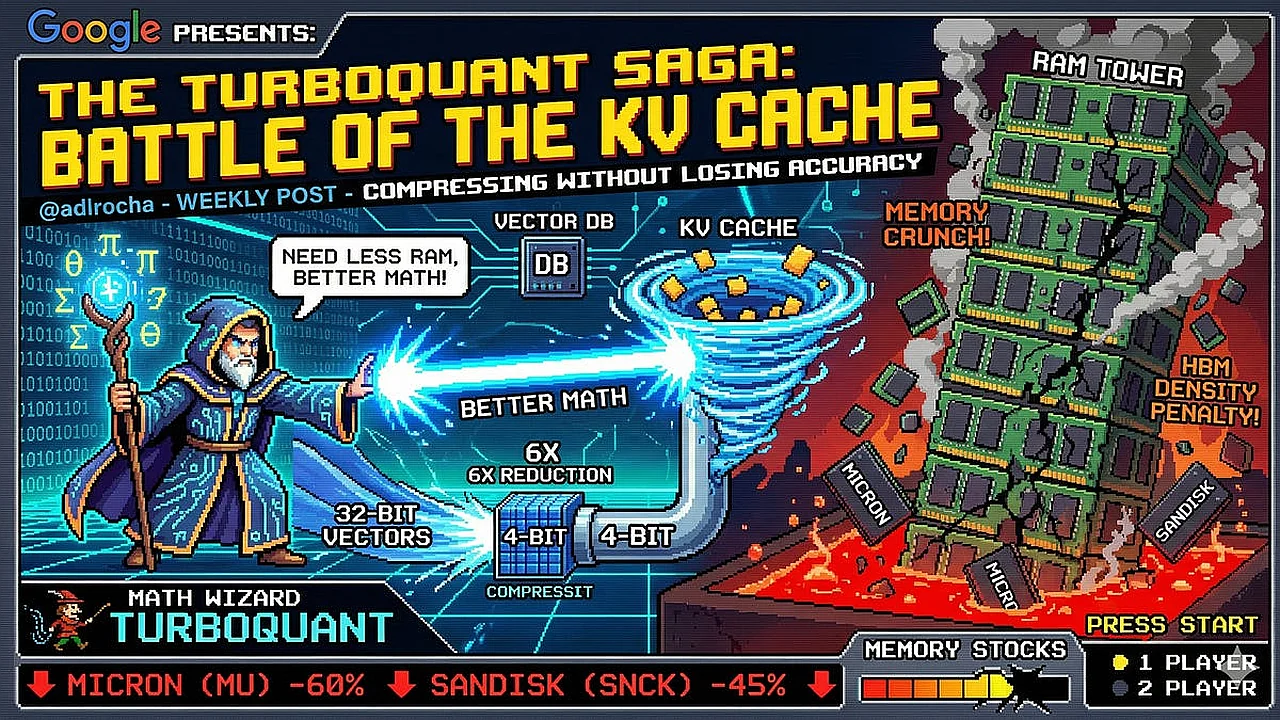

Google has presented TurboQuant, a method aimed at one of artificial intelligence’s biggest hardware problems: memory. Instead of relying only on larger and more expensive chips, the idea is to shrink the data that large language models must keep in memory while they generate text. That could matter for companies building AI systems and for investors watching the memory chip market, Adlrocha.substack.com reports.

Large language models work by predicting one token at a time and constantly referring back to earlier tokens. To do that efficiently, they store key and value data from previous steps in what is known as a KV cache. This cache helps avoid repeating the same calculations, but it grows with every new token. In long chats, coding sessions, or document analysis tasks, the memory demand can become enormous.
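To give a sense of scale, the growth described above can be estimated with simple arithmetic. The sketch below is a rough back-of-the-envelope calculation, not a measurement of any real system: the function name `kv_cache_bytes` and the model dimensions (32 layers, 32 key/value heads, head dimension 128, 16-bit values) are assumptions chosen for illustration.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bytes_per_value=2, batch_size=1):
    """Estimate KV cache size in bytes for a transformer decoder.

    The leading factor of 2 accounts for storing both a key and a value
    vector per token, per layer, per head.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_value * batch_size)


# Hypothetical model dimensions, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 32, 128

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=1)
short_chat = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=4096)
long_doc = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=131072)

print(per_token / 2**20, "MiB per token")        # 0.5 MiB per token
print(short_chat / 2**30, "GiB at 4,096 tokens")   # 2.0 GiB
print(long_doc / 2**30, "GiB at 131,072 tokens")   # 64.0 GiB
```

Under these assumed dimensions, the cache grows linearly with the number of tokens, which is why long conversations and large documents put so much pressure on GPU memory.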

TurboQuant targets this KV cache. According to the source material, the technique compresses the stored vectors without causing a meaningful loss in model accuracy. In simple terms, it tries to keep the benefits of a large memory store while using less physical memory on the GPU. That could improve inference efficiency and reduce pressure on high-bandwidth memory supplies.
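The general idea of compressing stored vectors can be illustrated with plain uniform quantization. The sketch below is not TurboQuant, whose details are not given in the source; it is a much simpler, generic technique, and the helper names `quantize` and `dequantize`, the 4-bit setting, and the random test vector are all assumptions made for illustration.

```python
import random


def quantize(vec, bits=4):
    """Map each float to an integer code in [0, 2**bits - 1] (uniform quantization)."""
    lo, hi = min(vec), max(vec)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((x - lo) / scale) for x in vec]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Reconstruct approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]


random.seed(0)
# Stand-in for one cached key or value vector (hypothetical data).
vec = [random.gauss(0.0, 1.0) for _ in range(128)]

codes, lo, scale = quantize(vec, bits=4)
approx = dequantize(codes, lo, scale)

# Rounding to the nearest level bounds the error by half a quantization step.
max_err = max(abs(a - b) for a, b in zip(vec, approx))

# Storage: 16 bits per value originally vs. 4 bits per code plus two 16-bit constants.
original_bits = 16 * len(vec)
compressed_bits = 4 * len(codes) + 2 * 16
print("compression ratio:", original_bits / compressed_bits)
print("max reconstruction error:", max_err, "step size:", scale)
```

Here the vector takes roughly a quarter of its original space, at the cost of a small reconstruction error per value. Methods aimed at KV caches try to push this trade-off further while keeping model accuracy essentially unchanged.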

If such methods prove effective at scale, they may slightly change the conversation around AI infrastructure. Demand for advanced memory will likely remain strong, but smarter compression could reduce how quickly hardware needs grow. For the industry, that means better software may start solving part of a problem that many expected hardware alone to fix.
