DeepSeek introduces V4 model with 1.6 trillion parameters

Chinese AI lab DeepSeek has announced its latest large language models, DeepSeek V4 Flash and V4 Pro. The models succeed last year's V3.2 and the popular R1 and bring significant architectural improvements. Both support a context window of up to 1 million tokens, letting users work with very large collections of documents and code. Techcrunch.com reported the news.

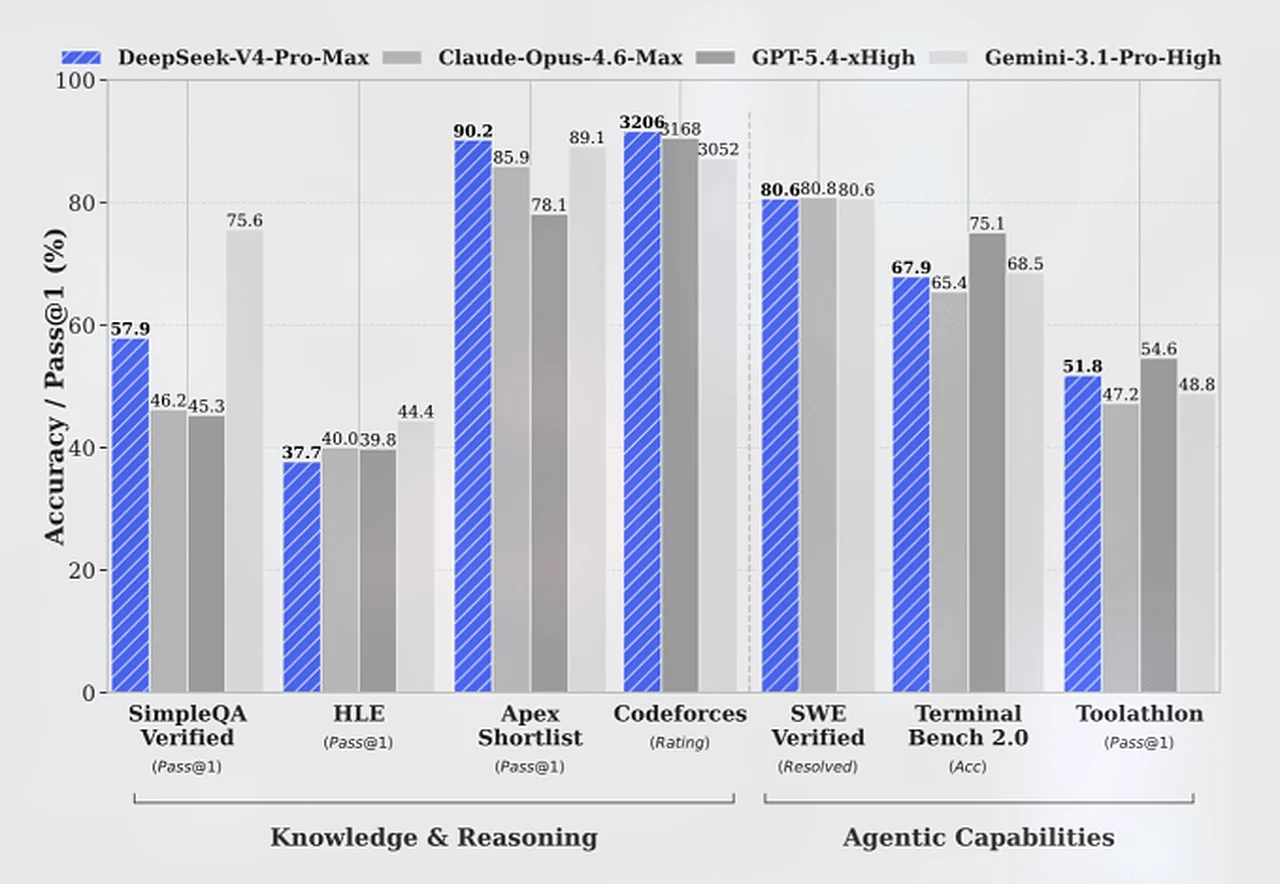

The V4 Pro model has 1.6 trillion total parameters, making it the largest open-weights model currently available. By comparison, Moonshot AI's Kimi K2.6 has 1.1 trillion parameters and DeepSeek's own V3.2 has 671 billion. The smaller V4 Flash model has 284 billion parameters. According to company representatives, the new systems have nearly closed the gap with today's leading open and closed models on reasoning benchmarks.

According to DeepSeek's own figures, the V4-Pro-Max model outperforms OpenAI's GPT-5.2 and Google's Gemini 3.0 Pro on reasoning tasks. On competitive programming benchmarks, both new models matched GPT-5.4. However, it was noted that on knowledge benchmarks, Chinese models still trail the most advanced U.S. systems by roughly three to six months.

The new models are also priced well below competing offerings. V4 Flash, for instance, costs $0.14 per 1 million input tokens, less than either GPT-5.4 Nano or Gemini 3.1 Flash.

The launch comes amid U.S. allegations of Chinese intellectual property theft, including accusations that DeepSeek copied models from Anthropic and OpenAI.