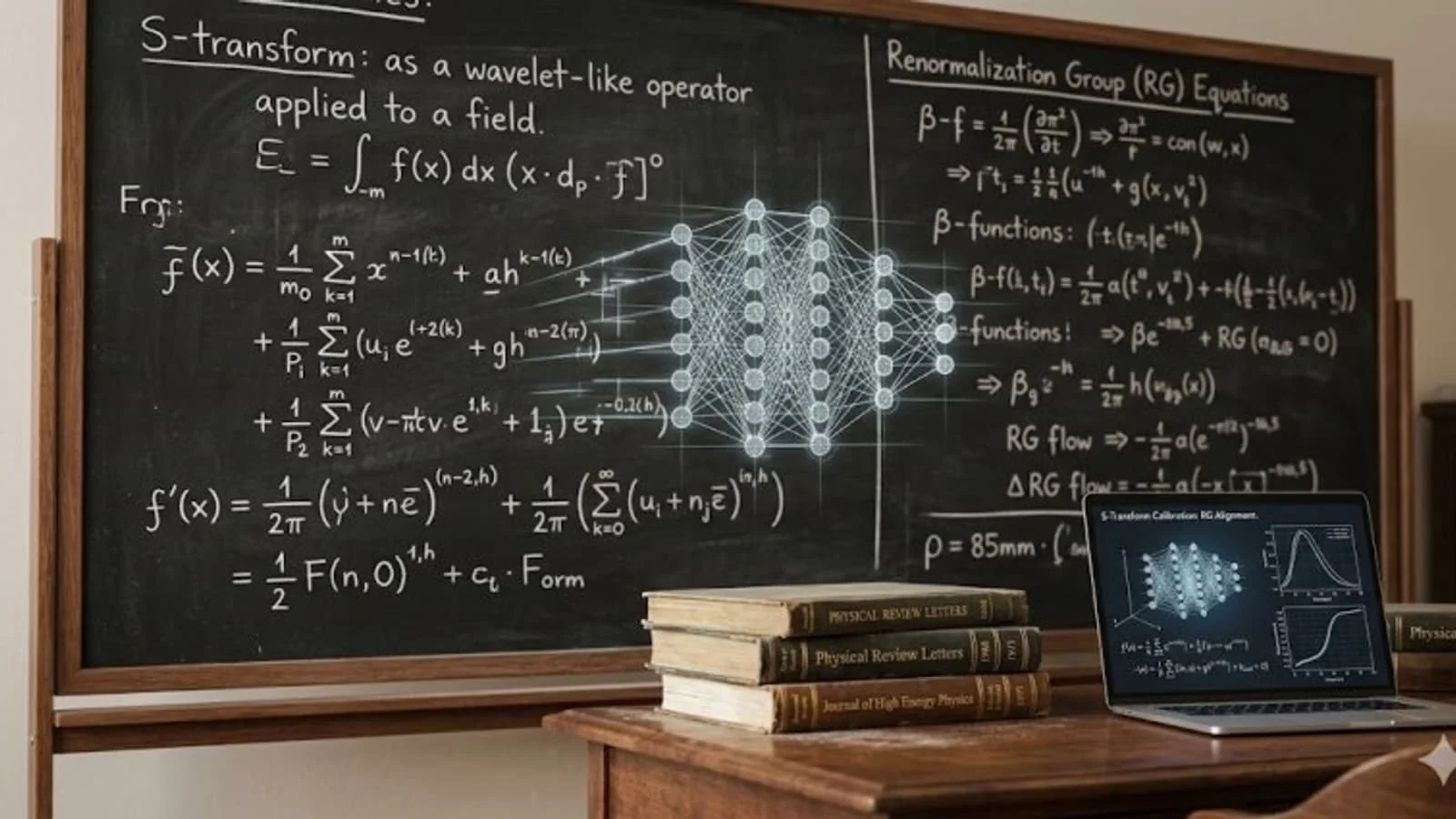

Harvard physicists discover the 'intelligence formula' for artificial intelligence

A group of theoretical physicists at Harvard University has published a paper that explains, in mathematical terms, why modern neural networks work so well. According to the study, the performance of artificial intelligence is governed not by chance but by strict physical laws. The scientists compared neural network training to complex physical systems and showed that the "scaling laws" of machine learning rest on fundamental principles of statistical mechanics, Ixbt.com reports.

The study builds on the concept of "renormalization" from quantum field theory. The physicists found that statistical noise in the data alters a model's parameters in much the same way that quantum fluctuations alter parameters in elementary particle physics. This effect keeps the model stable and lets it work correctly even when it has more parameters than there are training examples.

Using a mathematical tool called the "S-transform," the scientists derived equations that link training error to test error. This makes it possible to estimate a neural network's quality from its training data alone, without costly separate testing. The study also identifies four distinct regimes of neural network performance, which could help engineers turn model building into a predictable manufacturing process.

One of the most important practical results is the identification of an "initialization barrier." The physicists showed that endlessly increasing the size of a neural network does not always pay off. Under certain conditions, the randomness of the initial parameters "swallows" the useful signal, so scaling the model further brings no benefit. In such cases, accuracy has to be improved by other means, such as ensemble methods, which combine the predictions of several independently initialized models.
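Why an ensemble helps here can be illustrated with a deliberately simplified simulation. This is a sketch under a strong assumption, not the model analysed in the paper: each trained network is taken to output the true signal plus a random offset that depends only on its initialization. The function name `ensemble_error` and all parameter values are hypothetical. Averaging k such networks shrinks the initialization noise, so the error should fall roughly as 1/k.

```python
import random
import statistics


def ensemble_error(k: int, trials: int = 2000, signal: float = 1.0,
                   init_std: float = 1.0, seed: int = 0) -> float:
    """Mean squared error of a k-member ensemble in a toy model.

    Toy assumption (not from the study): each independently initialized model
    predicts the true signal plus a Gaussian offset caused by its random
    initialization, with standard deviation init_std.
    """
    rng = random.Random(seed)
    squared_errors = []
    for _ in range(trials):
        # Each ensemble member gets its own random "initialization noise".
        members = [signal + rng.gauss(0.0, init_std) for _ in range(k)]
        # The ensemble's prediction is the plain average of its members.
        prediction = statistics.fmean(members)
        squared_errors.append((prediction - signal) ** 2)
    return statistics.fmean(squared_errors)


if __name__ == "__main__":
    for k in (1, 4, 16):
        print(f"ensemble size {k:2d}: MSE ~ {ensemble_error(k):.3f}")
```

In this toy setting the initialization offset is the only source of error, so the printed error drops from about 1 for a single model toward about 1/16 for sixteen averaged models.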

The research also sheds light on the "double descent" phenomenon in machine learning, in which test error first falls, then rises, and then falls again as a model grows. The scientists showed that this is not an anomaly but a genuine, physically meaningful singularity. As the amount of data increases, the model's "effective parameter" changes, allowing the system to find the simplest and most accurate solutions amid the complexity.
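For a concrete picture of double descent, the sketch below uses a standard textbook result rather than the Harvard team's own equations: the asymptotic excess test risk of minimum-norm ("ridgeless") least-squares regression with isotropic Gaussian features. With p parameters, n training samples, gamma = p/n, signal strength r² and noise variance σ², the risk is σ²·gamma/(1 - gamma) when gamma < 1 and r²·(1 - 1/gamma) + σ²/(gamma - 1) when gamma > 1, diverging at gamma = 1. The function name `ridgeless_risk` and the defaults r² = 1 and σ² = 0.25 are illustrative choices.

```python
import math


def ridgeless_risk(gamma: float, r2: float = 1.0, sigma2: float = 0.25) -> float:
    """Asymptotic excess test risk of min-norm least squares, gamma = p / n.

    Assumes isotropic Gaussian features, signal strength r2 and noise
    variance sigma2 (a textbook model, not the study's own setup).
    """
    if gamma <= 0:
        raise ValueError("gamma must be positive")
    if gamma == 1.0:
        # Interpolation threshold: the risk diverges.
        return math.inf
    if gamma < 1.0:
        # Under-parameterized: pure variance term, which blows up as gamma -> 1.
        return sigma2 * gamma / (1.0 - gamma)
    # Over-parameterized: bias from the minimum-norm choice plus a shrinking variance term.
    return r2 * (1.0 - 1.0 / gamma) + sigma2 / (gamma - 1.0)


if __name__ == "__main__":
    for gamma in (0.25, 0.5, 0.9, 0.99, 1.01, 1.1, 2.0, 10.0):
        print(f"p/n = {gamma:5.2f}  risk = {ridgeless_risk(gamma):8.3f}")
```

The printed risk climbs to a spike around p/n = 1 and then falls again just above the threshold, reaching 0.75 at p/n = 2 with these defaults. Read from right to left, the same curve shows the data-side effect: for a fixed model size, adding samples near p = n first hurts and then helps.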

Read “Zamin” on Telegram!